I’ve heard from people with severely limited bandwidth to a guy who is deaf/blind and reads the show on his Braille display. Over the years, though, I’ve gotten lots of positive feedback for providing the script of my shows. In fact, I don’t know anyone who does it this way. I realized that there are those who would rather read than listen, so why not just give them the full text? But this process is certainly not for everyone. I even tried writing just bullet points to remind me of what I wanted to say, but I found that I was writing full sentences and then editing them down to bullet points.

While I can sound articulate when speaking on mic to another person, when I’m alone my speech is filled with “ums” and “ahs”.

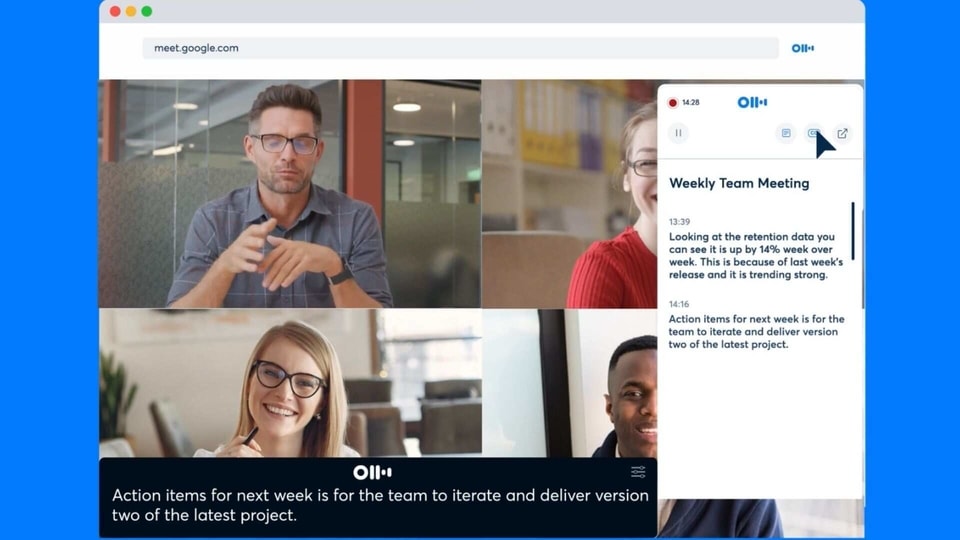

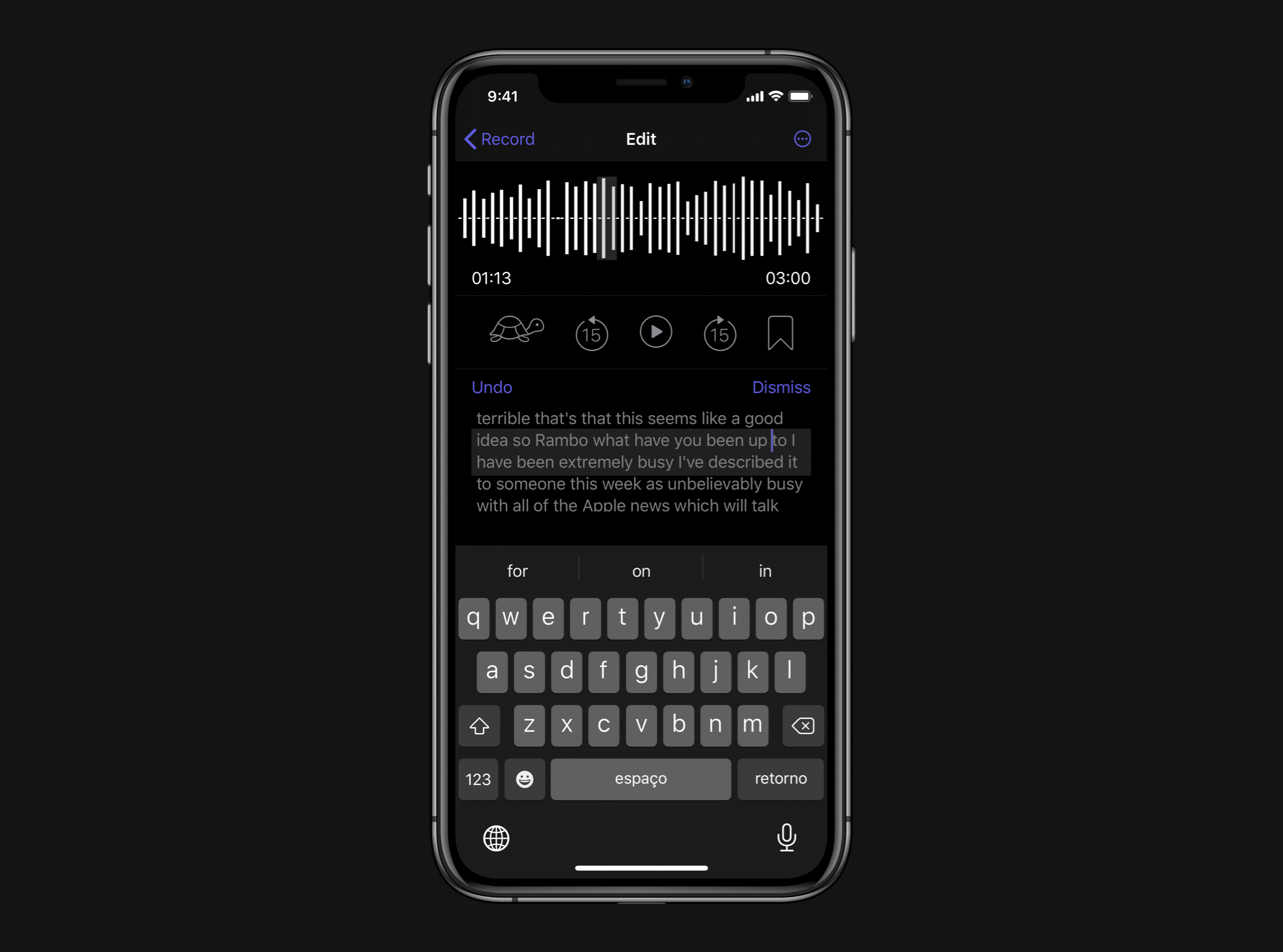

I have found over the nearly 15 years of podcasting that I don’t speak well as a solo speaker without a script. You have probably figured out that I create my content as a podcast and also as a series of blog posts.

But if you're already using Otter to keep track of all the useful information in meetings, you can save yourself all the fumbling with your phone at the beginning of a meeting and concentrate on having your conversation. Transcription isn't perfect, and you'll probably still want to take outline notes with your own action items and any key decisions, because what you get in a transcript is a flood of text including every digression and back-and-forth discussion. Both versions of Otter get some words wrong ('currency' instead of 'concurrency'). Otter Assistant correctly recognised 'fuzzing', which the phone app mistook for 'buzzing' and 'placing', but the phone app recognised 'of course' correctly instead of suggesting 'at a cost'.

But it didn't always detect the transition from one speaker to another as well as the phone app, or catch everything at the beginning or end of what someone said. The Otter Assistant did recognise some voices straight away, and that improved quickly as we used it for more transcriptions inside Teams.

Image: Mary Branscombe / ZDNet

Because we'd used the iPhone to transcribe previous conversations, speaker attribution was better there at first. Otter learns to recognise specific voices and remembers the names you give them, but that will be affected by the microphone and speaker combination on your devices. Otter Assistant for Teams didn't initially recognise speakers as well as the iPhone Otter app we'd used for some time; the apps also made different mistakes in transcription. But in some cases, the Otter Assistant missed a couple of words at the beginning of some sentences that the phone transcript captured. In practice, this mostly produced a more accurate transcription: for example, correctly getting '8 AM' as a time rather than the 'AM AM' we saw in the transcription of the same meeting done with Otter on an iPhone. Theoretically, recording the audio stream directly should give better quality than using a device speaker and microphone.

That won't always work for conference events hosted in Teams: for example, the Teams-based Q&A sessions at the recent Microsoft Ignite conference were set to open in the web version and not expose the meeting details in the URL (or show in our Teams calendar), so we couldn't even try to invite the Otter Assistant. Again, you'll have to do it from the Otter dashboard, and you need the URL of the Teams invite link, which includes any meeting password. Unlike the integrated transcription, Otter Assistant isn't restricted to scheduled meetings: if you jump into a quick meeting to sort something out, you can still use the assistant.
